How Current Metrics Are Masking The True Problem-Solving Power Of Large Language Models

Martín Marlatto

CSO at WillDom | Partner

Introduction

Are we really seeing the full power of large language models (LLMs), or are the numbers we look at hiding their true skills? Many of us judge LLMs by quick tests or short tasks, expecting clear, immediate answers. But what if these quick checks miss how well LLMs handle complex problems over longer periods?

This article dives into why the usual ways we measure LLM performance often paint an incomplete picture. We'll explore how current metrics can underestimate how well LLMs work on tasks that need deep, multi-step thinking over time. The result is a misleading impression known as the illusion of diminishing returns, in which bigger and better models seem to stop improving on harder tasks.

We'll look at why these common tests fall short, share recent research suggesting LLMs can sustain complex reasoning, address the doubts people have, and suggest smarter ways to measure their true capabilities.

Understanding Evaluation Metrics for LLMs

When researchers test LLMs, they often use benchmarks focusing on how well a model answers questions, summarizes text, or translates sentences right away. For example:

- BLEU and ROUGE scores measure how much a model's output overlaps, word sequence by word sequence, with a reference text. BLEU is used mostly for translation and ROUGE mostly for summarization.

- Accuracy on short question-answering (QA) datasets tells us if the model gets a single fact or answer correct.

- Benchmarks like GLUE and SuperGLUE combine several straightforward language tasks to give a general sense of a model's language understanding.

These tests are great for quick checks and comparing different models, but they mostly focus on short-term success. They don't measure how well a model can keep track of details and reasoning steps over many turns or sustain a complex task that involves planning, connecting information across paragraphs, or following a long instruction.
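To make the "match against a reference" idea concrete, here is a minimal sketch of a word-overlap score. It is a simplified stand-in for the idea behind BLEU and ROUGE, not the full definition of either metric, and the function name `unigram_overlap` is made up for this example.

```python
from collections import Counter


def unigram_overlap(candidate: str, reference: str) -> dict:
    """Simplified word-level precision, recall, and F1 between two strings.

    Illustrative only: real BLEU and ROUGE use longer n-grams and
    extra corrections that are left out here.
    """
    cand = Counter(candidate.lower().split())
    ref = Counter(reference.lower().split())
    # Counter intersection keeps the smaller count of each shared word.
    overlap = sum((cand & ref).values())
    precision = overlap / max(sum(cand.values()), 1)
    recall = overlap / max(sum(ref.values()), 1)
    f1 = 0.0 if overlap == 0 else 2 * precision * recall / (precision + recall)
    return {"precision": precision, "recall": recall, "f1": f1}


scores = unigram_overlap("the cat sat on the mat", "the cat is on the mat")
print(scores)
```

A score like this only tells you how many words line up with the reference. It says nothing about whether the model could have kept its reasoning consistent over fifty more steps.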

Imagine grading a chess player only by their first few moves in a game. It doesn't tell you if they can strategize for hours or adapt to changing situations. Current LLM metrics create a similar blind spot.

The Illusion of Diminishing Returns Explained

Here's the deal: when we test LLMs only on short, simple parts of a problem, their scores seem to improve fast at first, then slow down or even flatten as models get bigger and tasks get harder or longer. This pattern is what people call the illusion of diminishing returns.

But this is more about the test itself than the model. Since the evaluations focus only on short-term results, they miss the subtle, steady improvements LLMs make in handling long processes or multi-step reasoning.

Basically, if you only measure the first step in a complex journey, you'll never see how well someone completes the whole trip. So the "plateau" is often an artifact: it reflects what we measure, not how much the model can do.
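A back-of-the-envelope sketch shows why a small-looking gain on a short test can matter a lot on a long task. It rests on a loud assumption that is mine, not a result from the research discussed here: every step succeeds independently with the same probability, so finishing an n-step task has probability p to the power n. The function names are made up for illustration.

```python
import math


def task_success_rate(step_accuracy: float, num_steps: int) -> float:
    """Chance of getting every step right, ASSUMING independent, equally reliable steps."""
    return step_accuracy ** num_steps


def horizon_length(step_accuracy: float, target: float = 0.5) -> int:
    """Longest task, in steps, that is still completed with probability >= target."""
    if not 0.0 < step_accuracy < 1.0:
        raise ValueError("step_accuracy must be strictly between 0 and 1")
    return math.floor(math.log(target) / math.log(step_accuracy))


for acc in (0.90, 0.99, 0.999):
    print(
        f"step accuracy {acc}: "
        f"100-step success {task_success_rate(acc, 100):.3f}, "
        f"horizon {horizon_length(acc)} steps"
    )
```

Under this toy model, going from 90% to 99% per-step accuracy looks like a modest bump on a single-step test, yet the task length the model can finish half the time goes from 6 steps to 68. Real steps are not independent, so treat the numbers as an illustration of the shape of the effect, not a prediction.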

Evidence of Underestimated Long-Horizon Capabilities

Recent studies suggest LLMs can actually shine on longer, multi-step tasks when the test is designed to capture the whole task.

For example, some experiments asked models to solve detailed logic puzzles, write multi-paragraph essays, or plan complex projects step-by-step. Instead of one-off answers, the models worked through sequences of reasoning and kept track of earlier parts of their answers.

These studies found that:

- Models improved steadily as they got bigger or were given better training, even on long tasks.

- When the evaluation looked at the entire solution rather than just quick answers, success rates were much higher than standard metrics suggested.

- In some cases, LLMs displayed an ability to self-correct, revise earlier steps, and keep longer context in mind better than expected.

This suggests LLMs are developing stronger internal processes for sustained thinking, not just handing out quick answers.
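Here is a rough sketch of what "looking at the entire solution" can mean in practice. It assumes each model run has already been graded step by step into a list of booleans. That data format, the function names, and the example runs are all hypothetical and made up for illustration; they are not results from any study.

```python
from typing import Sequence

Run = Sequence[bool]  # one graded run: True means that step was correct


def first_step_accuracy(runs: Sequence[Run]) -> float:
    """Share of runs whose first step is correct (the 'quick answer' view)."""
    return sum(run[0] for run in runs) / len(runs)


def full_task_accuracy(runs: Sequence[Run]) -> float:
    """Share of runs in which every step is correct."""
    return sum(all(run) for run in runs) / len(runs)


def mean_correct_prefix(runs: Sequence[Run]) -> float:
    """Average number of steps completed before the first mistake."""
    def prefix_length(run: Run) -> int:
        count = 0
        for ok in run:
            if not ok:
                break
            count += 1
        return count

    return sum(prefix_length(run) for run in runs) / len(runs)


# Made-up graded runs, four steps each.
runs = [
    [True, True, True, True],
    [True, True, False, True],
    [True, False, False, False],
    [False, True, True, True],
]
print(first_step_accuracy(runs), full_task_accuracy(runs), mean_correct_prefix(runs))
```

The three numbers answer different questions, so reporting only the first one hides how far a model actually gets through a task.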

Counterarguments and Criticisms

Of course, there are valid reasons to be cautious:

- Maintaining coherence over long outputs is still tough. LLMs sometimes contradict themselves or forget details after many sentences.

- Some improvements might be "surface tricks." Critics argue models get better scores by using shortcuts or patterns, not true understanding.

- Testing long tasks is expensive and complicated. Running deep evaluations takes more time and resources, making it hard for many researchers or companies to try.

These concerns are important and show that long-horizon evaluation isn't perfect yet. But recognizing these challenges doesn't mean the usual short tests are enough. It just means we need better tools and approaches to get a fuller view.

Re-affirming the Argument

Despite the challenges, the big picture remains: by relying on short-term metrics, we're not appreciating how much LLMs have grown in handling complex, multi-step tasks. This narrow focus can mislead developers and funders, causing:

- Misplaced research goals chasing quick wins instead of deep reasoning.

- Less investment in the innovation needed for improving long-term model planning.

- Slower real-world adoption where sustained task performance matters most, such as coding, teaching, or legal reasoning.

If we want to unlock the true potential of LLMs, we have to use evaluation methods that look beyond instant answers and measure what models can really do over time.

Practical Recommendations

So how do we improve?

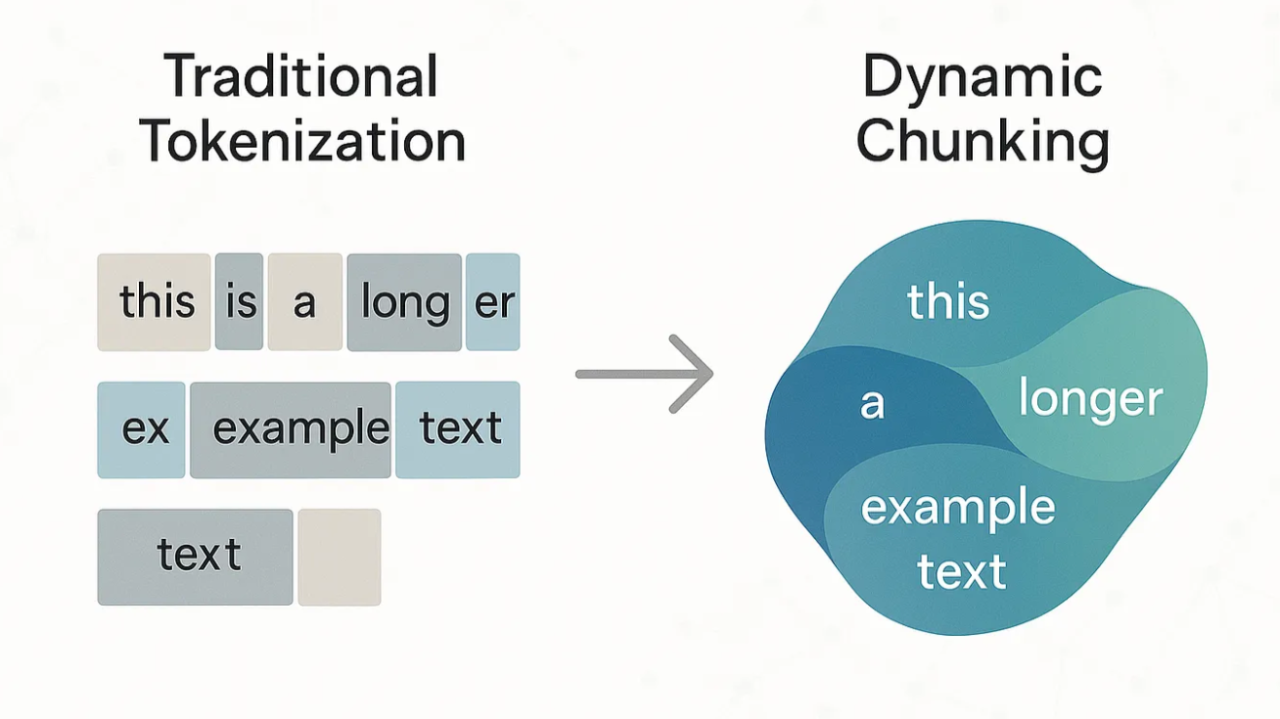

- Add multi-step reasoning benchmarks: Tests where models must chain together several logical steps or decisions, not just pick an answer.

- Use extended context tasks: Stories, projects, or conversations that span many paragraphs or turns, checking if the model keeps details and follows through.

- Test memory retention: Challenge models to recall information from earlier in the text or past interactions to see how well they remember and use context.
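The memory-retention idea can be sketched as a small probe: plant a fact early, pad the text with filler, then ask for the fact back and see how far apart the two can be before recall fails. Everything here is an assumption for illustration. `model_fn` stands for whatever function calls your model, and `toy_model` is a fake stand-in that only "sees" the last 60 words so the example runs on its own.

```python
from typing import Callable, Dict, List

FILLER = "The weather report for the region was unremarkable that day."


def build_recall_prompt(code: str, filler_sentences: int) -> str:
    """Plant a fact at the start, pad with filler, then ask for the fact."""
    fact = f"The access code is {code}."
    padding = " ".join([FILLER] * filler_sentences)
    question = "Question: what is the access code?"
    return f"{fact} {padding} {question}"


def retention_curve(
    model_fn: Callable[[str], str], code: str, distances: List[int]
) -> Dict[int, bool]:
    """Map each filler length to whether the reply contains the planted code."""
    return {d: code in model_fn(build_recall_prompt(code, d)) for d in distances}


def toy_model(prompt: str, window_words: int = 60) -> str:
    """Hypothetical stand-in model that only reads the last `window_words` words."""
    visible = " ".join(prompt.split()[-window_words:])
    marker = "access code is "
    start = visible.find(marker)
    if start == -1:
        return "I don't know."
    return visible[start + len(marker):].split(".")[0]


curve = retention_curve(toy_model, "7421", [0, 2, 4, 8, 16])
print(curve)
```

Swapping `toy_model` for a real model call turns this into a simple curve of recall against distance, which is the kind of signal a single short-answer score cannot show.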

Collaboration between AI researchers, cognitive scientists, and metric experts can help design well-rounded evaluations that tap into how humans solve complex problems over time.

On the industry side, teams can pilot these richer metrics on real use cases, such as long customer support chats, multi-stage coding tasks, or deep research assistance, to get a clearer picture of practical strengths and weaknesses.

Conclusion

Right now, we measure LLMs mainly by how well they handle quick, simple tasks. But research suggests they're actually getting better at sustained, multi-step problems, and those are skills current metrics often miss.

Imagine a future where we use richer, long-horizon evaluations to spot these hidden powers early. That would speed up innovation, direct smarter research, and help build AI assistants that really stick with us through complex tasks.

To get there, we need to change how we measure, not just aim for flashy results. Only by seeing the full picture can we unlock the true problem-solving potential of large language models.

Let's start measuring for the long haul.

Note: Written with a little help from my LLM :)

Source: arXiv:2509.09677